Jiayi (Chloe) Lu

Product Manager | Digital Creator | Life Explorer


About Me

Chloe is a distinguished professional in the fields of product management and UX design, currently residing in Vancouver, British Columbia. Her expertise lies in crafting unique digital experiences and strategies that cater to a diverse range of businesses and users.

Chloe's journey into the digital design world is marked by a deep-seated passion for solving complex user experience puzzles. With a solid background in project management, she has honed her skills in resource allocation, roadmap management, and innovative problem-solving, consistently translating these into successful project outcomes. Her proficiency spans working with both budding start-ups and established enterprises, reflecting her adaptable nature.

The educational foundation that Chloe has built underpins her abilities as a creative problem solver. As a certified User Experience Designer, she continuously seeks opportunities for growth and embraces new challenges in the ever-evolving digital landscape. Her commitment to inclusive design showcases her dedication to crafting experiences that resonate with end-users from all walks of life.

Professionally, Jiayi Lu (Chloe) is consistently eager to take on projects that allow her to contribute her skills to the burgeoning world of digital innovation. She is open to engaging in both freelance opportunities and long-term collaborations, ensuring a tailored approach to each unique challenge. Her fluency in Mandarin, Cantonese, and English enables her to operate seamlessly across different cultural contexts, adding a valuable dimension to her professional outreach.

Jiayi Lu’s dedication to her craft, matched with her creative prowess, positions her as a reliable partner for those seeking to enhance their digital presence. Whether it's through dynamic UX design or strategic product management, Chloe is committed to making a meaningful impact in the digital realm.


The Ethics of Speed: How Product Managers Hold the Line in AI Development

There is a particular kind of pressure that lives in the space between a sprint deadline and a nagging instinct that something is not quite ready. In AI product development, that pressure is not just common. It is constant. The culture of fast shipping has always been a defining feature of tech, but AI has raised the stakes in ways that make speed a genuinely ethical question, not just a strategic one.

Product managers sit at the center of that tension every single day. They are expected to move fast, to ship, to demonstrate progress. And yet they are also the people most responsible for asking whether something should ship at all, and what happens when it does.

## Why Speed Feels Like the Default

There is a reason teams default to moving fast in AI product development. The market moves quickly, competitors are always shipping, and investors and stakeholders want to see momentum. In SaaS specifically, the pressure to iterate at speed is baked into the culture.

But with AI-native products, speed carries a different kind of risk. A poorly designed feature in a traditional SaaS product might frustrate a user or create a support ticket. A poorly designed AI feature can erode trust in ways that are much harder to recover from. It can produce outputs that mislead users, reinforce bias, or make decisions that users did not consent to and cannot easily contest.

The case for moving fast is real. The risks of moving carelessly are also real. The product manager's job is to hold both truths at once and navigate between them with intention.

## What Responsible Shipping Actually Looks Like

Responsible shipping is not about slowing everything down or adding friction for its own sake. It is about building the discipline to ask better questions before something goes out the door.

In practice, that starts with clarity around what the AI feature is actually doing. Not at a technical level, necessarily, but at a user impact level. Who is affected by this output? What happens when the model is wrong? Is there a way for users to understand or contest what the AI has decided? These are not abstract ethics questions. They are product questions, and they belong in the same conversation as timelines and acceptance criteria.

It also means being honest about what is and is not known at the point of shipping. AI products are probabilistic by nature. They do not behave the same way every time. Responsible shipping means having a clear-eyed view of where uncertainty lives in the product and communicating that honestly, both internally and to users.

## The PM as the Person Who Pumps the Brakes

There is an uncomfortable truth in AI product development: often, the product manager is the only person in the room whose job it is to slow down and ask whether something is ready. Engineers are optimizing for code quality. Designers are optimizing for experience. Executives are optimizing for timelines. The PM is the person who has to hold the line on all of those dimensions simultaneously, including the one that asks whether this is responsible to ship right now.

That is a hard position to be in, especially in a fast-moving culture where pumping the brakes can feel like blocking progress. But that instinct to pause is not a weakness. It is one of the most valuable things a product manager can bring to an AI team.

The challenge is developing the judgment to know when that instinct is right and the courage to act on it when it is. That judgment comes from having a clear set of principles that guide decision-making, not just a vague sense that something feels off. It comes from understanding the user deeply enough to anticipate downstream consequences. And it comes from building the kind of credibility with stakeholders that makes it possible to raise a concern and be heard, rather than dismissed.

## Building a Culture Where Responsible Shipping Is the Norm

Individual judgment matters, but it is not enough on its own. The most durable version of responsible AI shipping happens when it is built into how a team operates, not just when a single PM sounds the alarm.

That means making space in the product process for the questions that often get skipped when timelines get tight. It means building lightweight frameworks that make it easier to evaluate readiness across both functional and ethical dimensions. It means treating post-launch review as a genuine learning opportunity, not just a formality.

It also means talking openly about failures when they happen. AI products will occasionally ship something that does not behave as intended. The teams that handle that well are not the ones that avoid it. They are the ones that have built the processes and the psychological safety to surface problems quickly, respond honestly, and iterate with accountability.

In SaaS specifically, where user trust is a core part of the value proposition, that kind of culture is not just the right thing to build. It is a competitive advantage.

## Practical Anchors for Fast-Moving Teams

There are a few questions worth building into any AI product review, regardless of how tight the timeline is.

- What is the worst-case output this feature could produce, and what is the plan if it happens?

- Do users understand what the AI is doing and why?

- Is there a meaningful way for users to provide feedback or contest an AI-generated result?

- What does "good enough to ship" actually mean for this specific feature, and who has agreed on that definition?

None of these questions require a lengthy ethics review or a separate governance process. They can be asked in a standard sprint review or a pre-launch checklist. The goal is not to add bureaucracy. It is to make sure that the conversation happens before the feature goes live, not after something goes wrong.

## The Line Is Worth Holding

Speed and responsibility are not opposites. The best AI product teams find ways to move quickly and ship thoughtfully, because they have built the systems, the culture, and the shared language to do both at once.

But when those two things genuinely conflict, when the timeline is real and the feature is not ready, someone has to hold the line. That is the product manager's job. Not because it is comfortable, and not because it always makes the PM popular. But because no one else in the room is positioned to do it with the same combination of user empathy, business context, and cross-functional accountability.

The ethics of speed in AI product development will only become more urgent as AI capabilities grow and deployment cycles accelerate. The product managers who develop the judgment, the courage, and the frameworks to navigate that tension well will not just be better PMs. They will be the ones shaping what responsible AI actually looks like in practice, one product decision at a time.

Stop Asking If AI Is Good Enough and Start Figuring Out How to Work With It

There is a conversation happening across the tech industry, in Slack channels, over coffee, and in the group chats of people who work in product, engineering, and design. It goes something like this: "Is AI actually good enough yet?" or "Our team tried it and it wasn't that impressive." The question is understandable. But it might also be the wrong one entirely. The more time spent in rooms, virtual or otherwise, with peers navigating this shift, the clearer it becomes: the teams moving forward are not the ones waiting for AI to prove itself. They are the ones figuring out how to work alongside it.

## The Mindset Gap Is the Real Problem

Spend time talking to enough people in this industry and a pattern starts to emerge. Professionals across different company sizes and structures are still approaching AI from a place of skepticism or uncertainty, asking whether the tools are good enough rather than asking how the work itself needs to change. That distinction matters enormously.

It is easy to understand why. Doubt is a reasonable response to any significant shift. The noise around AI has been loud, and not all of it has been grounded. But there is a difference between healthy skepticism and staying stuck in a defensive posture while the ground shifts beneath you. The former keeps you sharp. The latter keeps you behind.

What tends to go unexamined is this: AI is not just a new category of tooling. It is changing how teams collaborate, how workflows are structured, and how decisions get made. That is a fundamentally different kind of change than upgrading software or switching project management platforms. It asks something more of the people using it, a willingness to rethink assumptions about how work gets done, not just which tool gets opened in the morning.

The more productive question is not "does this AI do its job well enough?" It is "what does our way of working look like when AI is genuinely part of the team?"

## What Was Assumed Before Is Not the Golden Rule for the Future

One of the most visible places this plays out is in team structure. For a long time, certain team configurations were treated as standard. Cross-functional teams of a certain size, with a certain mix of roles, were considered the baseline for shipping a good product. That assumption is worth revisiting.

Working inside a small, high-output startup team makes this especially clear. When every person wears multiple hats and AI tools are woven into the workflow, it becomes possible to move at a pace and with a breadth of capability that would have required a significantly larger team just a few years ago. Workflows that once needed dedicated headcount can be partially automated. Gaps that used to require a specialist hire can be bridged. The definition of what a "complete" team looks like is genuinely shifting.

This does not mean traditional team structures are obsolete. Large organizations, particularly those in highly regulated or legacy-heavy industries, cannot restructure overnight. Many are still in the middle of digital transformation efforts that began years ago, and AI is arriving as a second wave before the first has fully settled. Healthcare systems still running on paper-based processes, government agencies navigating decades-old infrastructure: these are real constraints, not failures of imagination.

The honest answer is that it depends on the organization. What works for a pre-seed startup will not map cleanly onto an enterprise with thousands of staff and deeply embedded processes. The insight worth holding onto is not that one model is better, but that the old defaults should be questioned. What was assumed before is not necessarily the golden rule anymore.

## Picking Battles and Iterating Forward

For product managers sitting inside slower-moving organizations, this raises a practical and often uncomfortable question: how do you push for meaningful change without burning trust or burning out?

The answer is not to arrive with a transformation agenda and a timeline. It rarely works, and it often creates resistance where none existed before. A more grounded approach is to start by learning how existing teams work and how they collaborate. Before advocating for anything new, understand the current state. What is the foundation already in place? Where is the friction? What are people already trying to solve?

From that foundation, change can be introduced iteratively, the same way a product gets refined after launch. Not everything needs to be pushed at once. Some workflows are worth modernizing with urgency. Others can wait. Picking the right battles means understanding which changes will have the most meaningful impact with the least organizational disruption, and starting there.

This is where a product iteration mindset becomes genuinely useful beyond the product itself. Teams are not so different from products in this sense. They have their own version of a roadmap, a backlog of friction points, and a learning loop. The more a team runs together, the clearer it becomes what works and what needs to change. Iterate from there. Let the team's own experience be the data.

The trap to avoid is holding the past up as a standard. Not because the past was wrong, but because the context has changed. The tools have changed. The expectations have changed. Anchoring too tightly to how things were done before makes it harder to see what is possible now.

## What This Moment Actually Asks of Us

Navigating an era of layered transformation, digital and now AI-driven, requires something that does not show up in any tool tutorial or framework doc. It requires the willingness to stay genuinely curious about how work is evolving, to hold open questions with patience rather than defaulting to resistance, and to recognize that the people figuring this out in real time are not the ones with the loudest opinions about AI. They are the ones quietly adapting, iterating, and building something that works for their specific context.

There is no single answer to what the new way of working looks like. But there is a common thread among the teams and individuals moving with clarity through this period: they have stopped asking whether AI belongs in the room, and they have started figuring out how to make the room work better because it is there.

That shift, from doubt to design, is where the real work begins.

Building User Trust in AI-Powered SaaS: What Product Managers Need to Understand

There is a moment every product manager working in AI eventually faces. The feature is built, the data looks promising, and the rollout plan is solid. But the users? Some of them are not convinced. Some are watching from a distance. Others have already made up their minds that this is not for them. That tension between what a product can do and what users are willing to trust it with is one of the most underexplored challenges in AI product development right now.

Trust in AI-powered products is not a binary switch. It does not simply turn on when the product is good enough. It builds, slowly, through a mix of experience, context, and perception. And for product managers, understanding the shape of that trust journey is just as critical as shipping the feature itself.

## The Spectrum of Skepticism (and Why It Is Actually a Good Sign)

Spend any time in product communities, online forums, or social spaces where creators, operators, and professionals gather, and a pattern becomes clear. When it comes to AI, people do not land in one place. There are the early movers who adopt fast and advocate loudly. There are the experimenters who are genuinely curious but cautious. There are the observers who are watching carefully before committing. And then there are the skeptics who are holding firm, unconvinced.

What is easy to miss is that this spectrum is not a problem. It is a signal. Each of these groups is telling a product team something important about what trust actually requires, and where it is still missing.

The skeptics, in particular, are worth paying close attention to. They are not simply resistant to change. Many of them have legitimate concerns, about quality, about authenticity, about what it means for their craft or their workflow. A copywriter who pushes back on an AI-assisted writing product is not necessarily being closed-minded. They may be asking a question the product has not yet answered: will this make my work better, or will it make me replaceable?

That question matters. And the product managers who treat it as friction to overcome, rather than a signal to understand, tend to build products that win early adoption but struggle with lasting trust.

## What Actually Shifts Someone from Skeptic to Advocate

The more interesting question is not why people are skeptical. It is what changes their mind. And it is rarely a single moment or a clever marketing message. It tends to be something slower and more personal.

Consider the arc of a skeptical user who eventually comes around. What often happens is not that they were convinced in a conversation. It is that they had an experience, however small, that reframed what the product meant to them. Maybe the output was better than they expected. Maybe it saved them time in a way they could not ignore. Maybe a peer shared a result that shifted their reference point. Over time, repeated exposure to something that genuinely works in their context has a compounding effect on perception.

This has real implications for how AI-powered products are built and positioned. Features that generate impressive demos are not the same as features that build trust over time. Trust comes from consistent, contextually relevant value delivered in ways that respect how the user already works. It comes from transparency about what the AI is doing and what it is not doing. It comes from giving users enough agency that they feel like partners in the process, not passengers.

For product managers, this means the launch is not the finish line. The post-launch period, where real users are forming real impressions in real workflows, is where trust is actually built or lost. That is the phase that demands the most attention, and the most humility.

## The Product Manager's Role in Shaping the Trust Environment

There is a tendency in product circles to frame trust as a UX problem or a communications problem. Write better onboarding copy. Add an explainability layer. Run a trust-building campaign. These are not wrong, but they are incomplete. Trust in AI products is also a product strategy problem, and it starts long before the first user ever logs in.

The decisions made during development, about what data the model uses, how outputs are surfaced, what controls users have, and how errors are handled, all shape the trust environment users walk into. A product that is opaque about how it reaches a result, or that overclaims what it can do, is setting up a trust deficit that no amount of onboarding can fix.

This is where the product manager's role becomes particularly significant. Sitting at the intersection of technical capability, user needs, and business goals, a PM has the rare ability to ask the questions that others might not think to ask. What happens when the AI gets it wrong? What does the user experience in that moment, and does it make them more or less likely to try again? Is the product being honest about its limitations, or is it papering over them with confident-sounding language?

These are not just ethical questions, though they are that too. They are strategic ones. Products that earn trust by being transparent and genuinely useful in imperfect moments tend to build the kind of loyalty that is very hard to replicate. Products that overpromise and underdeliver in ways that feel invisible tend to erode trust quietly, until users simply stop engaging.

The AI content landscape is still relatively new. There are users at every stage of the adoption curve, and there are still vast portions of the market that have not yet formed a firm opinion. That is an enormous opportunity, but it is also a responsibility. The habits and norms being established right now around how AI products earn trust will have a long tail.

## Trust Is a Product, Not a Promise

At the heart of all of this is a simple but easy-to-forget idea: trust is not something that gets communicated into existence. It is built through what a product actually does, repeatedly, in the hands of real people with real stakes.

The most durable AI products will not be the ones with the flashiest features or the most aggressive launch strategies. They will be the ones built by teams that took the full spectrum of user skepticism seriously, that designed for the observer as thoughtfully as for the early adopter, and that understood trust as a long-term outcome worth engineering for.

That is the work. And for product managers working at the intersection of AI and human experience, it may be the most important work of all.

From AI Hype to Reality in SaaS: A Product Manager’s Playbook for Grounded, Responsible Implementation

AI has become the easiest feature to sell and the hardest capability to ship well. In SaaS, the hype arrives fast: every workflow promised an assistant, every dashboard promised automation, every product roadmap promised transformation. Yet users rarely ask for “more AI.” They ask for fewer headaches, clearer decisions, faster outcomes, and fewer moments where software feels unpredictable.

The gap between hype and reality is not a technology gap. It is a product discipline gap. The teams that win with AI are not the ones that talk the loudest about models. They are the ones that stay grounded in user pain, supervise outputs like a responsible operator, and keep the product humble enough to learn.

## What “AI hype” looks like in SaaS product work

AI hype is not excitement. Excitement is healthy. Hype is what happens when expectations detach from constraints.

In practical SaaS terms, hype usually shows up in three ways.

Promise inflation

Features are positioned as “magic” instead of scoped capabilities. AI is treated like a universal solution rather than a tool that needs clear boundaries, quality data, and human review.

Capability confusion

Stakeholders assume AI can reason like a domain expert across messy edge cases. In reality, many AI systems produce plausible outputs that may be incomplete, inconsistent, or wrong in subtle ways.

Responsibility drift

When AI is framed as autonomous, accountability gets blurry. Users are left wondering whether to trust the product, and teams struggle to define what “good” looks like beyond demos.

Hype becomes dangerous when it shapes roadmaps around narrative instead of need. The fastest way to lose trust is to ship an AI feature that behaves confidently while failing quietly.

## The foundational skill: stay grounded and supervise the output

A useful mental model for AI in SaaS is this: the product is still responsible for outcomes, even if the model produces the text, prediction, or recommendation.

That is why supervision is not optional. It is core product craft.

Supervision includes:

- Designing workflows where users can review, correct, and override outputs

- Making uncertainty visible when it matters, rather than hiding it behind confident language

- Treating AI results as drafts, suggestions, or signals, depending on the use case

- Instrumenting quality, not just usage

Grounding is the companion skill. The most fundamental part of product design and development still applies: stay humbled by user needs and user pain points. AI does not replace discovery. It raises the cost of skipping it.

When teams chase AI for its own sake, they start optimizing for novelty. When teams ground in reality, they optimize for usefulness.

## “Go touch some grass”: why emotional intelligence is a product advantage

There is a modern temptation to build AI-native products from abstractions: datasets, prompts, dashboards, and internal assumptions about what users should want. That is how products drift.

A more durable approach is simple and surprisingly rare: be out in the community. Know who is being built for. Engage with them. Talk to them. Listen past the first answer.

This is where emotional intelligence becomes a strategic advantage, not a soft skill.

Emotional intelligence in AI product management shows up as:

Seeing the world from a different perspective

Users do not experience “model quality.” They experience friction, risk, and confidence. A feature that looks impressive in a demo can feel unsafe in a real workflow.

Detecting when users are signaling discomfort

In AI experiences, hesitation is data. If users keep checking, rechecking, or avoiding an AI feature, the product is communicating unreliability, even if metrics show initial adoption.

Keeping teams humble

Human interaction has a way of removing the fantasy from product narratives. It forces prioritization around what matters, not what sounds visionary.

The phrase “go touch some grass” captures a practical truth: AI product managers cannot stay credible while living only in roadmaps and model outputs. Real insight comes from real contexts.

## A practical playbook for responsible AI implementation in SaaS

Responsible AI does not require perfection. It requires discipline, clarity, and an honest relationship with risk. The following practices help product teams move from hype to outcomes.

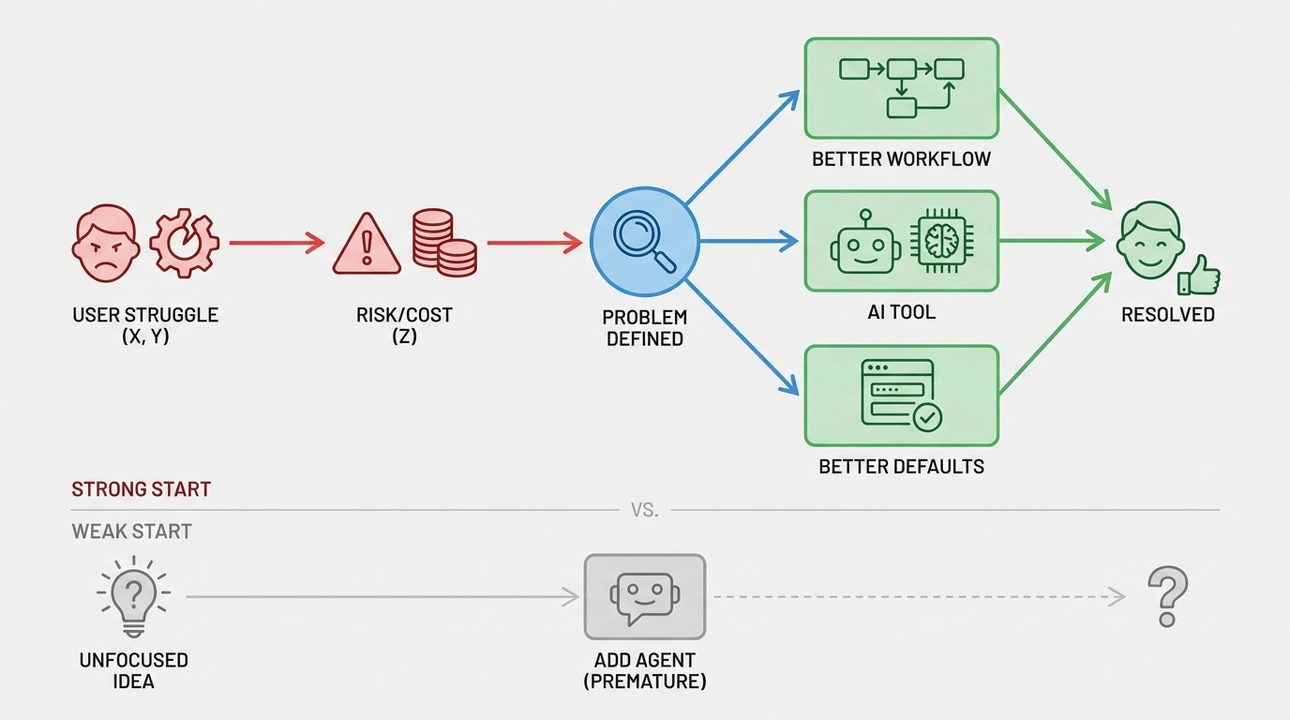

1. Start with a user pain statement, not an AI capability statement

A strong starting point sounds like:

“The user struggles to do X because Y, which creates risk or cost Z.”

A weak starting point sounds like:

“We should add an agent.”

When the pain is clear, AI becomes one of several possible tools. Sometimes AI is right. Sometimes a better workflow, better defaults, or better data entry removes the need.

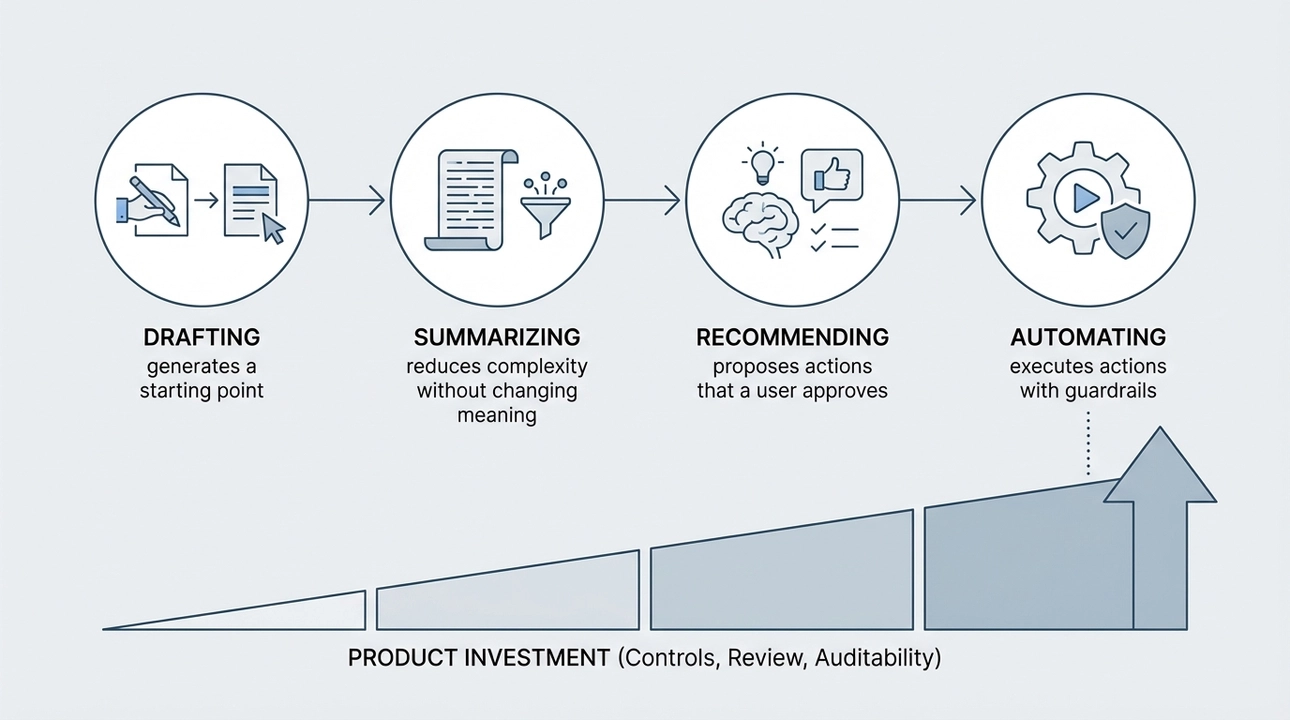

2. Define the role of AI in the workflow

AI can play different roles, and each one implies different product requirements.

- Drafting: generates a starting point that the user edits

- Summarizing: reduces complexity without changing meaning

- Recommending: proposes actions that a user approves

- Automating: executes actions with guardrails

The more the AI acts, the more the product must invest in controls, review steps, and auditability.
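One way to make that escalation concrete is to write down, per role, which controls a feature must have before it ships. The sketch below is a minimal illustration in Python. The control names (`edit_before_use`, `link_to_source`, `approval_step`, `audit_log`, `undo`) and the mapping itself are assumptions chosen for the example, not a standard; each team would agree on its own list.

```python
from enum import Enum


class AIRole(Enum):
    """The four roles an AI feature can play in a workflow."""
    DRAFTING = "drafting"
    SUMMARIZING = "summarizing"
    RECOMMENDING = "recommending"
    AUTOMATING = "automating"


# Hypothetical mapping from role to the controls the product should provide.
# The more the AI acts on its own, the longer the list becomes.
REQUIRED_CONTROLS: dict[AIRole, set[str]] = {
    AIRole.DRAFTING: {"edit_before_use"},
    AIRole.SUMMARIZING: {"link_to_source"},
    AIRole.RECOMMENDING: {"link_to_source", "approval_step"},
    AIRole.AUTOMATING: {"approval_step", "audit_log", "undo"},
}


def missing_controls(role: AIRole, implemented: list[str]) -> set[str]:
    """Return the controls still missing for a feature playing this role."""
    return REQUIRED_CONTROLS[role] - set(implemented)
```

Calling `missing_controls(AIRole.AUTOMATING, ["approval_step"])` would flag the audit log and undo as gaps to close before launch.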

3. Build supervision into the UX, not into a policy doc

Users need simple, native ways to steer the system.

- Clear affordances to accept, edit, or reject

- Lightweight feedback that improves future outcomes

- Explanations that focus on what the system did, not how impressive it is

If the only safety mechanism is “users should be careful,” the product has not done its job.

4. Measure quality as a product metric, not a research metric

Adoption metrics alone can be misleading in AI. Curiosity drives clicks. Trust drives retention.

Quality can be tracked through:

- Edit rate: how often users rewrite AI output

- Override rate: how often users reverse AI recommendations

- Time to completion: whether the feature reduces real work

- Escalation signals: support tickets, retries, abandonments

The goal is not to prove the model is smart. The goal is to prove the workflow is reliably helpful.
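The first three signals can usually be computed from ordinary product event logs. The sketch below is a minimal illustration in Python. The `SuggestionEvent` schema and its field names are hypothetical, and it assumes rates are measured against all suggestions shown; a real pipeline would adapt both to its own data.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class SuggestionEvent:
    """One AI suggestion shown to a user (hypothetical schema)."""
    edited: bool                # user rewrote the AI output before using it
    overridden: bool            # user reversed the AI recommendation
    seconds_to_complete: float  # time from suggestion to finished task


def quality_summary(events: list[SuggestionEvent]) -> dict[str, float]:
    """Summarise edit rate, override rate, and median time to completion."""
    if not events:
        raise ValueError("need at least one event")
    total = len(events)
    return {
        # bools count as 0/1, so sum() gives the number of True values
        "edit_rate": sum(e.edited for e in events) / total,
        "override_rate": sum(e.overridden for e in events) / total,
        "median_seconds": median(e.seconds_to_complete for e in events),
    }
```

Tracked over time and compared against the pre-AI baseline for the same task, these numbers say more about whether the workflow is helping than raw click counts do.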

5. Keep the product honest about uncertainty

Many AI failures are not caused by a wrong answer. They are caused by the product presenting a guess as a fact.

Responsible SaaS experiences respect user judgment by:

- Avoiding overly confident system voice in high-risk contexts

- Making it clear when outputs are suggestions

- Encouraging verification when consequences are meaningful

Trust is built when the product behaves like a careful partner, not a performative expert.

## The real differentiator: a humble product that learns

The market will keep rewarding AI narratives in the short term. But users reward reliability.

The most resilient AI SaaS products will be the ones that:

- Stay anchored to human needs and real environments

- Treat AI outputs as supervised contributions, not authority

- Use emotional intelligence to detect friction that metrics miss

- Iterate with humility instead of defending the first implementation

In practice, this means teams spending less time arguing about whether a feature is “AI-native” and more time asking whether it reduces a real burden for a real user.

## A final note on building what deserves trust

AI makes it easier to ship something that looks finished. It also makes it easier to ship something that should not be trusted.

The teams that move from hype to reality will not be defined by the models they choose. They will be defined by the discipline they keep: staying grounded, staying close to users, and supervising what gets shipped into someone else’s workflow.

The most useful reminder is also the simplest. Go back to reality. Go meet the people the product is meant to serve. Then build the AI that fits into their world, not the AI that looks good on a slide.

How I Position Myself as a Learning Product Manager in Conversational AI: Strategies, Examples, and Leadership Lessons

## Opening introduction

I remember the moment I decided to stop pretending I had to know everything and start leaning into being a learner. Conversational AI moves fast, and the product questions are rarely binary. As a product manager who wants to lead in this space, I chose a different ambition than claiming expertise overnight. I committed to a visible, repeatable learning practice that builds credibility, drives better products, and earns influence across teams.

This piece is for product people who want to position themselves as learning leaders in conversational AI. I write from experience building products at a small company where I wore multiple hats, and from countless experiments that taught me how to turn curiosity into measurable outcomes. If you are navigating ambiguous model behavior, shifting UX expectations, or the politics of deploying new AI features, these are the practical approaches I use to grow, lead, and ship with confidence.

## Why a learning identity matters in conversational AI

Conversational AI is not a mature feature you can spec once and forget. Models change, user expectations evolve, and new failure modes appear as you scale. Claiming expertise without continuous learning is a liability. Positioning yourself as a learner does three things that matter for leadership.

First, it signals intellectual humility. When you admit the unknowns and frame experiments to reduce them, people trust your judgment more than when you make definitive promises. Second, it accelerates discovery. A learning posture forces you to run small, fast experiments that reveal what truly moves the needle. Third, it creates durable influence. Teams follow leaders who can synthesize new evidence, update strategy, and provide a clear path forward.

A concrete example: early in a conversational assistant project, our team faced mysterious drops in task completion after a model update. Claiming the problem was purely model quality would have delayed the fix. Instead, I organized a rapid root-cause study that combined qualitative call transcripts, session replay, and a few controlled A/B tests. We found the dominant issue was a small UX regression that amplified ambiguities in the new model. Framing the effort as learning allowed us to prioritize correctly and communicate tradeoffs to stakeholders.

## How I structure my learning practice

Learning without structure becomes noise. I use a repeatable framework to collect evidence, synthesize insights, and turn them into actions.

Research cadence

- Weekly signal review. I set aside time every week to review fresh qualitative and quantitative signals: new failure cases, user quotes from support, and changes in key metrics.

- Quarterly deep dives. Every quarter I run a focused research sprint on one hypothesis about user behavior, intent distribution, or safety edge cases.

Experiment design

- Start with a crisp question. What specific assumption will this experiment confirm or refute? Rather than asking if the assistant is faster, I ask if clarifying prompts increase successful task completion for intents with >10 percent ambiguity.

- Define a minimal intervention. The simplest change that could shift the metric might be a prompt tweak, a small UX affordance, or a reranking rule. I prefer incremental interventions that are cheap to deploy and easy to interpret.

- Predefine success criteria. I avoid vague outcomes. Success is a numeric improvement in a specific metric or a reduction in a documented failure class.
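To make "predefine success criteria" concrete, here is a minimal sketch of how a criterion could be written down before a test runs and then checked against the results. It is an illustration under stated assumptions, not the tooling used in the projects described here: the `SuccessCriterion` class, the metric name, the thresholds, and the counts are all hypothetical, and the check uses a standard one-sided two-proportion z-test.

```python
import math
from dataclasses import dataclass


@dataclass
class SuccessCriterion:
    """Hypothetical container for criteria written down before the test runs."""
    metric: str
    min_lift: float      # smallest absolute lift worth acting on (0.03 = 3 points)
    alpha: float = 0.05  # significance threshold for a one-sided test


def two_proportion_test(success_a, n_a, success_b, n_b):
    """Return (lift, one-sided p-value) for variant B versus control A."""
    p_a = success_a / n_a
    p_b = success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # Every session succeeded or every session failed: no evidence either way.
        return p_b - p_a, 1.0
    z = (p_b - p_a) / se
    p_value = 0.5 * math.erfc(z / math.sqrt(2))  # P(Z >= z) under the null
    return p_b - p_a, p_value


def meets_criterion(criterion, success_a, n_a, success_b, n_b):
    """True only if the lift is both large enough and statistically credible."""
    lift, p_value = two_proportion_test(success_a, n_a, success_b, n_b)
    return lift >= criterion.min_lift and p_value < criterion.alpha


criterion = SuccessCriterion(metric="task_completion_rate", min_lift=0.03)
# Made-up counts: 400/1000 completions in control, 460/1000 with clarifying prompts.
print(meets_criterion(criterion, 400, 1000, 460, 1000))
```

Requiring both a minimum lift and a significance threshold keeps a tiny but statistically detectable change from being reported as a win.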

Evidence synthesis

I keep a living document that captures patterns across experiments. Every new learning is mapped to one of three outcomes: contradicts a prior assumption, refines how we implement an idea, or validates the approach and suggests scaling. That mapping helps me prioritize next steps and communicate recommendations succinctly.

Tools and signals I rely on

- Conversation transcripts and thematic coding. Reading raw transcripts is time-consuming but indispensable for surfacing failures.

- Session replay and funnel analytics. Where do users drop? Which utterances precede resets or handoffs?

- Small-scale A/B tests. Even on 5 percent of live traffic, controlled changes can reveal meaningful shifts.

- Error taxonomies. I maintain a short list of recurring error types so experiments target the highest-leverage areas.

## Turning learning into product decisions and leadership

Learning becomes leadership when it influences others' decisions. Here are the practices I use to translate my experiments into impact.

Narrative, not just data

Numbers matter, but narratives move teams. I pair metrics with a short narrative that explains the user problem, the experiment, and the recommendation. A good narrative answers three questions: why this matters now, what we tested, and what we recommend next. That format makes it easy for engineers and executives to take action.

Designing for stakeholder adoption

When I want a change to stick, I think about the smallest policy or UI change that shifts behavior. For example, when model hallucination was a recurring issue, rather than pushing for a wholesale model swap, I proposed a selective guardrail for intents that could trigger verifiable responses. This compromise reduced risk while proving the approach.

Building a culture of experiments

You cannot be the only learner. I make learning visible by sharing quick postmortems, hosting a show-and-tell at sprint reviews, and creating a shared repository of micro-experiments. That lowers the bar for others to try hypotheses and builds momentum.

Mentorship through curiosity

As a learning product manager, I mentor colleagues by modeling how to ask better questions, design clearer experiments, and interpret ambiguous results. Teaching others to iterate reduces single points of failure and scales the curiosity culture.

## Metrics and signals I track to show progress and influence

Leading indicators are especially important when long-term model improvements take time. I track a mix of leading and lagging metrics so I can show progress even when core model upgrades are distant.

Leading indicators

- Experiment velocity: number of vetted experiments executed per month

- Qualitative hit rate: percentage of experiments that eliminate or reduce a documented failure class

- Time from hypothesis to deployment: how quickly we move from idea to measurable result

Lagging indicators

- Task completion rate by intent cohort

- Reduction in support tickets tied to conversational failures

- Latency and recovery rate for broken flows

Operational signals

- Developer and designer feedback loops: how many improvements originate from cross-functional pairing

- Model regression incidents: frequency and time to mitigation

Reporting these consistently turned ambiguous progress into a narrative that stakeholders could budget and plan around.
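The leading indicators above can be computed from a simple experiment log. The sketch below is illustrative only: the `Experiment` record, its field names, and the sample entries are hypothetical, and it measures "time from hypothesis to deployment" as the number of days between writing the hypothesis and getting a measurable result.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median


@dataclass
class Experiment:
    """Hypothetical log entry for one vetted experiment."""
    name: str
    hypothesis_date: date
    result_date: date
    reduced_failure_class: bool  # did it eliminate or reduce a documented failure class?


def leading_indicators(experiments, year, month):
    """Summarize experiments whose results landed in the given month."""
    in_month = [
        e for e in experiments
        if e.result_date.year == year and e.result_date.month == month
    ]
    if not in_month:
        return {"velocity": 0, "hit_rate": None, "median_days_to_result": None}
    hits = sum(e.reduced_failure_class for e in in_month)
    days = [(e.result_date - e.hypothesis_date).days for e in in_month]
    return {
        "velocity": len(in_month),
        "hit_rate": hits / len(in_month),
        "median_days_to_result": median(days),
    }


# Made-up entries for illustration.
log = [
    Experiment("clarifying prompt", date(2024, 3, 1), date(2024, 3, 11), True),
    Experiment("fallback copy", date(2024, 3, 4), date(2024, 3, 8), False),
    Experiment("rerank rule", date(2024, 3, 12), date(2024, 3, 19), True),
    Experiment("handoff button", date(2024, 3, 25), date(2024, 4, 2), True),
]
print(leading_indicators(log, 2024, 3))
```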

## Common pitfalls and how I avoid them

Chasing novelty over user value

AI is intoxicating. New capabilities can distract teams from solving the root user problem. I always return to user impact as the north star. If a new model feature does not improve key task metrics or reduce cognitive load, I deprioritize it.

Overfitting to short-term metrics

A single A/B test can be noisy. I require replication across at least two contexts or a sustained effect before recommending large rollouts. This reduces oscillation between competing priorities.

Neglecting safety and trust

Conversational AI can create surprising safety challenges. I embed simple guardrails early: intent-based verification for critical actions, conservative fallback behavior, and clear user affordances that indicate when the assistant is uncertain. These small design decisions protect users and build trust.

Failing to communicate uncertainty

Leaders who pretend to know everything build brittle plans. I present recommendations as hypotheses with confidence levels and potential failure modes. That transparency invites corrective feedback and fosters faster learning.

## Closing reflections

Positioning myself as a learning product manager in conversational AI changed how I lead. It shifted my daily focus from defending a roadmap to curating a discovery pipeline. It made me more credible in stakeholder rooms because I could show a history of experiments, explain why a particular direction made sense, and demonstrate an ability to adapt when new evidence arrived.

If you want to follow this path, start small. Run your first micro-experiment this week. Document what you learn. Share it. Teach someone how to design a better hypothesis. Over time, the compound effect of that practice will do more to establish your leadership than any single roadmap slide.

I am still learning. That is the point. By making learning visible, structured, and actionable, I have built influence, shipped better conversational experiences, and grown a team that is curious, pragmatic, and resilient. If you are shaping products in this space, consider adopting the learning leader posture. It changes not only what you build, but how you lead.

The Learning-Oriented Product Manager: How Continuous Growth Builds Credibility, Resilience, and Impact in AI and Beyond

## Opening introduction

I remember the moment I stopped waiting to be called an expert and started showing up as a learner. That shift changed how I build products, lead teams, and protect my mental bandwidth. It also became the thread that connects a career that began as a project coordinator at a startup agency, moved into system integration at a large telecommunications company, and now finds me contributing to AI-native products at startups.

This article is about leaning into learning as a strategy, not as humility theater. It is for product people who want to build credibility and influence without pretending to have all the answers. It is for those of us who juggle diverse experiences and want to turn that variety into authority. I write from the trenches, with practical routines, frameworks, and observations that have helped me stay effective and sane while learning on the job.

## Why being learning-oriented matters now

The pace of change in product and technology feels relentless. New paradigms, tooling, regulatory shifts, and ethical questions arrive faster than any one person can master. In this environment, playing expert is a liability. A learning-oriented approach is an asset because it lets you adapt, lead, and make better decisions under uncertainty.

Being learning-oriented means three things to me.

- Curiosity as a default. I choose questions over answers. I ask the right stakeholders, I read selectively, and I prototype quickly to validate assumptions.

- Systems for learning. I do not rely on ad hoc discovery. I build routines, feedback loops, and evidence-based practices that scale across teams and time.

- Humility with agency. I openly acknowledge gaps while driving progress. That combination builds trust because people see you improving, not posturing.

## The habits I rely on

Habits are the scaffolding of continuous growth. Here are the daily and weekly routines that have kept me moving forward across very different contexts.

- Micro learning windows. I block 20 to 45 minutes most days for focused learning. That might be reading a paper, following a conversation in a relevant forum, or exploring a new API. Small, consistent investments compound.

- Rapid experiments. I prototype in the cheapest possible way. For AI features that means end-to-end mockups, simple data checks, or conversation scripts before expensive engineering work.

- Reflection notes. After milestones or setbacks I spend 30 minutes writing what worked, what surprised me, and one concrete change for next time. These short notes become a personal knowledge base I revisit when designing future projects.

- Cross-functional learning rituals. I create spaces where designers, engineers, analysts, and product people teach each other one concept per week. Teaching accelerates understanding and spreads context.

- Boundary setting for mental health. I schedule deep work slots and protect them. I set expectations with stakeholders about response times. Protecting headspace is not selfish, it is strategic.

## A framework to build credibility while still learning

When you are new to a domain, credibility does not arrive from claiming mastery. It comes from a consistent pattern of learning, sharing, and delivering value. I use a simple three-stage framework I call Observe, Structure, Deliver.

Observe

- Spend concentrated time listening. Interviews, shadowing, support tickets, and usage data reveal real problems faster than opinions.

- Prioritize signal over volume. Target the highest-leverage sources of insight and document them.

Structure

- Turn observations into a compact model. Map core user jobs, constraints, metrics, and failure modes.

- Share the model early and invite critique. A shared model orients teams and surfaces assumptions that need testing.

Deliver

- Build the smallest useful increment that tests your model. Treat what you learn as a deliverable, held to the same rigor you expect of features.

- Measure and iterate. Credibility grows when you translate insight into outcomes, even small ones.

This framework helps me move from novice to trusted practitioner without pretending to be an authority I am not.

## Applying this across different workplaces

Different environments require different tactics. My varied background taught me how to adapt the learning-oriented approach to startups, large corporations, and AI-native teams.

Startups

- Emphasize speed and hypothesis-driven work. In early-stage teams, your credibility comes from rapid, measurable progress.

- Use low-cost experiments to surface product-market fit signals.

Large enterprise

- Focus on systems thinking and stakeholder mapping. Integration projects, especially in telecom or e-commerce platforms, demand clarity about dependencies and risk.

- Invest in clear communication and documentation. Institutional credibility often depends on reliability and consistency.

AI-native teams

- Ground discussions in data and guardrails. For conversational AI, for example, document failure cases, edge scenarios, and acceptable risk thresholds.

- Connect model behavior to user outcomes. Technical metrics are necessary but not sufficient to make product decisions.

Across all environments, the learning-oriented mindset helps me translate domain differences into repeatable patterns of discovery and delivery.

## Practical steps you can start this week

If you are ready to adopt a learning-oriented approach, here are concrete actions I recommend.

- Run one structured listening sprint. Spend a week focusing only on user conversations, support tickets, and usage data. Synthesize three clear hypotheses about user problems.

- Ship one micro experiment. Convert a high-priority hypothesis into the least costly test you can run and measure two outcomes.

- Start a public learning log. Publish short notes on LinkedIn or a newsletter about one insight you tested. This builds credibility through visible curiosity.

- Create a teach-back ritual. Once a month, present one new concept to your team and document feedback. Teaching accelerates internalization.

- Define two boundary rules. For example, no meetings on Tuesdays, and a 24-hour response expectation for non-urgent requests. Protect focused time.

## On mental health, resilience, and career storytelling

My journey includes times when I overextended to prove myself. That pattern cost me energy and clarity. Learning orientation helped me reframe success metrics from appearances to sustainable impact.

Resilience is not grit alone. It is a combination of routines that replenish cognitive capacity and systems that reduce friction. I now prioritize recovery blocks, small rituals to mark the end of the workday, and honest conversations about capacity with managers and peers.

When you tell your career story, frame it as a pattern of learning and contribution. Diverse roles are an asset when you show how each step taught you a transferable skill or perspective. For example, coordinating projects taught me stakeholder empathy, large-scale integration taught me systems thinking, and building conversational AI taught me human-centered modeling of language. That narrative is honest and powerful.

## What I still work on

I do not present this as a finished playbook. I continue to wrestle with questions of scale, ethics, and staying curious in moments of success.

- Ethics and product impact. As AI products scale, I work to embed ethical checks into experiments, not as an afterthought.

- Delegation. Learning orientation can tempt me to stay hands-on. I am practicing letting others lead and designing learning opportunities for them.

- Public positioning. I aim to share more process and more failures publicly, not just polished outcomes, to make learning visible.

## Closing reflections

Becoming a learning-oriented product manager is an active choice. It replaces the pressure to perform with an invitation to iterate. It turns diversity of experience into a competitive edge, because being curious across contexts builds pattern recognition and empathy.

If you are navigating new domains or feeling the pressure to always know, try reframing credibility as evidence of growth. Collect experiments, document what you learn, and protect the time that makes high-quality learning possible. Over time that evidence compounds into the kind of authority that people trust: not the authority of certainty, but the authority of consistent, thoughtful progress.

I hope this helps you reimagine expertise as a continuous practice. If you want, I can share a template for the Observe, Structure, Deliver framework or a weeklong listening sprint outline to get started.

How I Build AI-Native SaaS: Practical Product Leadership for AI Product Managers and Founders

## Opening introduction

The shift from embedding models into products to designing products that are AI-native is not incremental. It is transformational. I have spent years building and advising startups at the intersection of SaaS and AI, and the patterns that separate products that merely use AI from products that are defined by AI are clear and repeatable. This matters because the choices you make early about data, evaluation, team structure, and product metrics either enable compounding value or create technical debt and user mistrust that is expensive to unwind.

In this post I want to share a practical roadmap I use with founders and product teams. I write from experience at 11X Ventures and hands-on work with AI tools, focusing on decisions that are actionable, measurable, and aligned with business outcomes. My aim is to help you think beyond model selection and toward product design, adoption, and governance that scale.

## Reframe the problem: product before model

Too often teams start with model architecture or benchmark scores. I flip that script: start with the user problem and the outcome you want to change. AI should be a means to an end, not an engineering vanity project.

Ask four core questions before choosing a model:

- What specific user behavior or business metric will change because of this feature?

- How will I measure that change quantitatively and qualitatively?

- What data is required, and how sensitive or regulated is it?

- What is the acceptable failure mode, and what are the consequences for users?

These prompts focus the team on the product signal rather than the model noise. For example, when I worked on a knowledge retrieval feature for a sales enablement product, we defined success as a reduction in time-to-first-answer for reps and a measurable increase in adoption of the knowledge base. That guided our data strategy, UI design, and retention experiments more than any benchmark score.

## Design for the human in the loop

AI-native products rarely work well if they remove humans entirely. Instead, design for collaboration between machine and human. That means building interfaces that make AI behavior predictable and recoverable.

Key design principles I apply:

- Make uncertainty visible: show confidence scores, provenance, or rationale when it materially affects decisions.

- Offer fast recovery paths: allow users to correct outputs and have those corrections feed back into the model pipeline as supervised signals.

- Preserve control: avoid opaque automation for high-stakes tasks; prefer suggestions and assisted workflows.

A concrete example is a content moderation flow I helped design. We surfaced a brief explanation and a suggested action, and we logged accepts, overrides, and edits. Those events became the highest signal for retraining and dramatically reduced false positives within two iterations.

## Build data infrastructure as a product priority

Data is the raw material of AI-native products. Treat data pipelines, labeling systems, and instrumentation as first-class product features. Early tradeoffs here determine your ability to iterate safely and quickly.

Practices I insist on:

- Version your data and label schemas.

- Instrument every user interaction that could be a training signal.

- Create cheap, high-quality feedback loops to collect corrections and edge cases.

- Use data contracts to clarify ownership, privacy constraints, and retention policies.

At one portfolio company we created a simple in-app labeling widget that surfaced to customer support teams. Within six months the volume of labeled edge cases increased fivefold, and we reduced model error on those cases by over 40 percent. The cost was low because we prioritized in-context labeling over a separate annotation workflow.

## Operationalize evaluation beyond accuracy

Evaluation must be multi-dimensional. Accuracy is necessary but insufficient. I categorize evaluation into three buckets: behavioral metrics, safety and compliance, and operational health.

Behavioral metrics

- Engagement, task completion, and retention are the true signals of product-market fit.

- A model that optimizes an internal metric but reduces trust will harm long-term adoption.

Safety and compliance

- Audit for bias, privacy leaks, and regulatory requirements relevant to your domain.

- Establish clear monitoring for data drift and for outputs that violate policy.

Operational health

- Latency, cost per inference, and failure modes directly affect product margins and UX.

- Track signal quality: number of overrides, manual corrections, and escalation rates.

I instrument these dimensions from day one and include them in weekly product reviews. When teams treat these measurements as optional, they get surprised by hidden costs and user backlash later.
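As a rough illustration of tracking signal quality, the sketch below turns a log of user responses to AI suggestions into per-action rates. The event shape (a dict with an `action` key) and the four action names are assumptions made for this example, not a schema from any specific product.

```python
from collections import Counter

# Hypothetical action names for how a user responded to an AI suggestion.
ACTIONS = ("accept", "override", "edit", "escalate")


def signal_quality(events):
    """Return the share of each action among logged suggestion events."""
    counts = Counter(e["action"] for e in events if e["action"] in ACTIONS)
    total = sum(counts.values())
    if total == 0:
        return {action: 0.0 for action in ACTIONS}
    return {action: counts[action] / total for action in ACTIONS}


# Made-up log: 6 accepts, 2 overrides, 1 edit, 1 escalation.
events = (
    [{"action": "accept"}] * 6
    + [{"action": "override"}] * 2
    + [{"action": "edit"}]
    + [{"action": "escalate"}]
)
print(signal_quality(events))
```

A rising override or escalation rate after a model update is the kind of early warning that accuracy on an offline benchmark will not show.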

## Align team structure around outcomes

AI-native products require different collaboration patterns. I organize teams to reduce handoffs and increase ownership over outcomes.

Typical structure I favor:

- Product lead owns the user metric and the experiment roadmap.

- ML engineer owns model training pipelines and deployment safety checks.

- Data engineer owns feature stores, data contracts, and instrumentation.

- Design and research own the human trust layer and evaluation of UX for failures.

Cross-functional squads with a shared metric reduce blame and speed iteration. I also set clear escalation paths for safety incidents so that non-engineers can trigger rollbacks when user harm is detected.

## Pricing, go-to-market, and productization strategies

AI features often feel magical, and that creates pricing and packaging opportunities. But monetization must be grounded in measurable value and predictable costs.

Guidelines I use:

- Price for value, not compute. If a feature saves time, translate that into minutes or dollars saved per user.

- Introduce tiered features: basic assistive features in core plans, advanced automated workflows in premium tiers.

- Communicate limitations and expected behavior upfront to set the right expectations.

I coached a startup to add a usage-based premium for advanced automation. By framing it around minutes saved per user and providing a clear ROI calculator, they increased ARPA (average revenue per account) without a spike in complaints.

## Governance, safety, and regulatory readiness

Building AI at scale requires preparing for scrutiny. Governance is not just legal compliance; it is a trust-building mechanism with customers and partners.

What I implement early:

- Model cards and data lineage documentation for internal and external audits.

- Risk assessment templates that map features to potential harms and mitigation plans.

- Playbooks for incident response that cover user communication, rollback, and postmortem actions.

When regulators or enterprise customers ask for evidence of controls, the teams with these artifacts win trust and contracts faster. Governance accelerates adoption when it is practical and integrated, not bureaucratic.

## Iterate quickly with rigorous experiments

Speed matters, but speed without rigor is dangerous. I marry rapid experimentation with robust guardrails so teams can learn safely.

Experiment checklist I use:

- Define a primary outcome metric and a clear hypothesis.

- Limit exposure with feature flags and canary rollouts.

- Capture qualitative feedback from power users during early rollouts.

- Revert or iterate based on both quantitative and qualitative signals.

A small experiment that changed product direction for me involved switching ranking signals for a recommendations engine. The initial A/B test improved click-through but reduced session length. By digging into qualitative feedback we learned the change increased noise. Reverting and redesigning the UI improved both CTR and session time in the next iteration.
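Limiting exposure with a canary rollout usually comes down to deciding, deterministically, which users see the new behavior. The sketch below shows one common way to do that with a hash-based bucket. It is a simplified illustration, not a replacement for a feature-flag service; the function name, feature name, and user IDs are hypothetical.

```python
import hashlib


def in_canary(user_id: str, feature: str, exposure_pct: float) -> bool:
    """Deterministically place a user inside or outside a canary cohort.

    Hashing the feature name together with the user ID means the same user
    always gets the same answer for a given feature, while different features
    get independent cohorts.
    """
    digest = hashlib.sha256(f"{feature}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) % 10_000  # 0..9999, i.e. hundredths of a percent
    return bucket < exposure_pct * 100


# Expose a hypothetical feature to roughly 5 percent of users.
users = [f"user-{i}" for i in range(10_000)]
exposed = [u for u in users if in_canary(u, "clarifying_prompts", 5)]
print(len(exposed) / len(users))
```

Because the bucket for a user never changes, raising the percentage only adds users to the cohort. Anyone who saw the feature at 5 percent still sees it at 10 percent, which keeps the experience stable during a gradual rollout.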

## Practical toolkit and quick wins

If you are leading an AI product today, here are immediate actions that pay off quickly:

- Instrument three core signals: task completion, override rate, and time-to-value.

- Build a lightweight feedback capture inside the product that surfaces corrections to the data pipeline.

- Run a red team session that focuses on real customer scenarios, not abstract attacks.

- Draft a one-page risk map for each AI feature showing harm vectors and mitigations.

These moves are low cost but shift your team toward evidence-based iteration and safer deployments.

## Parting perspective on building for the next wave

The next decade will reward teams that treat AI as a product discipline, not just an engineering challenge. That means investing in data, human-centered design, evaluation, and governance in equal measure. It means aligning incentives across product, ML, and data teams and making decisions that prioritize long-term trust and measurable outcomes.

I am optimistic. When product leaders ask the right questions and make deliberate tradeoffs, AI becomes a force multiplier that creates sustainable differentiation. If you are building AI-native SaaS, focus on the interplay between human behavior and model behavior, and design systems that learn from real users in ways that are transparent and accountable.

## Closing note for builders

Every AI feature is an experiment on your customers. Treat experiments like promises: design them to be informative, safe, and respectful of user time and trust. When you do that, you will not only ship better products but also build durable businesses that users choose to rely on.

Balancing Present and Future in Product Strategy

In the dynamic field of product management, I often find myself at the crossroads of immediate demands and future aspirations. My journey began as a project coordinator, where I realized the significance of bridging engineering, design, and business teams to create a coherent product vision. It was during this time that I developed a passion for not just coordinating resources but understanding the intricacies of product development. Transitioning into a product manager role, I've learned the invaluable lesson that aligning cross-functional teams toward a shared goal is pivotal for success.

One of the key themes that have emerged in my career is the necessity of balancing immediate tasks with long-term product strategy. While my team is engrossed in current projects, I strive to maintain a forward-thinking perspective, ensuring that our efforts today build towards a sustainable future. It’s vital for product managers like myself to keep an eye on industry trends and predict what the future might hold. This strategic foresight enables us to ensure that the innovations and solutions we create remain relevant and add value over time.

Another essential aspect of my role is establishing thought leadership. In an environment where product managers often cannot showcase traditional portfolios, my aim is to build credibility through insights and innovations. By sharing thoughtful content and engaging with communities both online and offline, I am committed to contributing meaningful discourse to the field of product management.

Looking ahead, my long-term goal is to assist early-stage startups in growing their product offerings while also mentoring the next wave of product managers. This dual focus not only allows me to share my experiences but also learn from the fresh perspectives of those entering the field. It is through this exchange of knowledge that I believe we can push the boundaries of product innovation.

In conclusion, my journey has been one of continuous learning and adaptation. By fostering a balanced outlook, I aim to lead teams that are not only efficient in the present but also prepared for the challenges of tomorrow. If these insights resonate with you, feel free to connect and explore potential synergies in transforming ideas into impactful products.

How I turn weeks of B2B market research into days with LLMs

In traditional B2B sectors, the signal is buried under PDFs, paywalled reports, and scattered anecdotes. As a product manager and AI power user, I now treat large language models as my research copilot. The result is a workflow that compresses weeks of ramp-up into days without sacrificing rigor, and in a recent project it helped me deliver an on-point, expert-vetted readout in four to five business days.

## My AI research stack

I combine general-purpose LLMs with a curated corpus and source tracking. Instead of asking a model to know everything, I feed it the right things. That means assembling filings, analyst notes, policy documents, customer forums, and technical manuals, then using retrieval to ground every synthesis. I tag every claim with sources and confidence so I can audit later.

I also run prompts through reusable templates. One template extracts entities and relationships, another maps buyer roles and incentives, and another surfaces regulatory constraints and switching costs. The model becomes a thought partner that proposes angles I might miss while I decide which paths to pursue.

## The workflow that amplifies judgment

I start with a Decision Backlog, not a topic list. What decision will this research inform: pricing, go-to-market focus, product wedge, or partnership strategy? That narrows the scope and keeps each prompt tethered to an outcome.

Next, I build a staged funnel. The first pass is a wide net for context and vocabulary. The second pass clusters the market by use case and job to be done. The third pass pressure-tests the clusters with counter-evidence. At every stage, I ask the model to show assumptions and contradictions so I can follow up with targeted searches or SME calls.

For synthesis, I use comparative grids: the model drafts a table, I validate and edit, then ask for deltas that matter. It flags unusual pricing, non-obvious adoption blockers, and workflow dependencies. In my recent project in a traditional B2B vertical I was new to, this loop produced an insight map by day two and a client-ready deck by day five.

## Quality control and responsible use

Speed does not excuse sloppiness. I enforce three rules: ground claims in citations, triangulate with at least two independent sources, and separate facts from hypotheses. When the model extrapolates, I label it as a hypothesis and assign validation steps: interviews, surveys, or data pulls.
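These three rules are easy to check mechanically once claims are stored as structured records. The sketch below is one hypothetical way to do it, not the exact system behind this workflow: the `Claim` fields are invented for illustration, and "independent" is approximated as "from different publishers", which is a simplifying assumption.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Claim:
    """Hypothetical record for one statement in a research synthesis."""
    text: str
    publishers: List[str] = field(default_factory=list)  # one entry per cited source
    is_hypothesis: bool = False
    validation_steps: List[str] = field(default_factory=list)


def audit(claim: Claim) -> List[str]:
    """Return the rule violations for a claim (an empty list means it passes)."""
    issues = []
    if claim.is_hypothesis:
        # Hypotheses need a plan for validation: interviews, surveys, or data pulls.
        if not claim.validation_steps:
            issues.append("hypothesis has no validation step")
    else:
        if not claim.publishers:
            issues.append("fact has no citation")
        elif len(set(claim.publishers)) < 2:
            issues.append("fact is not triangulated across two independent sources")
    return issues


# Made-up claims for illustration.
claims = [
    Claim("Contracts renew annually", publishers=["Trade body", "Vendor filing"]),
    Claim("Buyers are led by operations", publishers=["Analyst note"]),
    Claim("Switching costs are falling", is_hypothesis=True),
]
for c in claims:
    print(c.text, "->", audit(c) or "ok")
```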

I also use adversarial prompts to hunt for blind spots. I ask the model to argue against our thesis, list where the analysis could fail, and surface edge cases. Finally, I review with domain specialists. In this project, their verdict was clear: the synthesis was on point, and the few gaps we found were easy to close because the structure was already there.

## What clients can expect

Faster ramp-up in unfamiliar domains without a drop in depth. Transparent sourcing and clear confidence levels. A defensible point of view that highlights risks and trade-offs. Tangible deliverables: market map, buyer personas with triggers, problem and workflow analysis, feature wedge candidates, pricing signals, and a learning plan for the next two weeks.

Beyond the deliverables, clients see cost efficiency. What once required a larger team over multiple weeks now takes a focused sprint. And because the process is traceable, it becomes a reusable asset for future research.

Trends I am watching reinforce this approach. Vertical-tuned models are getting better at structured extraction from messy documents. Retrieval quality is improving with smarter chunking and metadata. Evaluation frameworks are maturing, making it easier to measure synthesis quality, not just token accuracy. Together, these shifts make AI a credible analyst partner, not a shortcut.

The real story is partnership. I do not outsource judgment to a model, and I do not rely on manual reading alone. I combine them. That is how I moved from zero to credible in a complex B2B domain in days, delivered a narrative the experts respected, and kept the work auditable. If you are weighing how to adopt AI in your research practice, start with decisions, ground your synthesis, and let the model expand your angles while you own the call.

Articles

AI Has Changed How People Search for Information, and Critical Thinking Is Paying the Price

There is a quiet shift happening in how people find and consume information, and it is easy to miss because it feels so seamless. Working through a client project recently, something stood out in the data: click-through rates to individual web pages were declining, even for content that was genuinely useful and well-positioned. The culprit was not poor content quality. It was Google's AI Overview, which now summarizes search results directly on the results page, answering the user's question before they ever visit a single website.

That observation opened up a much larger question. AI has not just changed where people find information. It has fundamentally changed how people search, how they consume what they find, and how much they think critically about it. And just like social media before it, the convenience comes with a cost that most people are not paying close enough attention to.

## The Search Behavior Has Already Changed

Not long ago, searching for something meant typing a few fragmented keywords into a search bar and then digging through a long list of results, one by one. It meant spending hours, sometimes days, reading through materials, extracting relevant points, and synthesizing information into something usable. The process was effortful, but that effort was part of what made it valuable. People built their own understanding by doing the work.

That is largely gone now. The way people input queries has shifted dramatically. Conversational, plain-language questions have replaced keyword strings. With large language models, people go even further, typing out paragraphs of raw, unstructured thought and receiving a coherent, organized response in return. The barrier between a question and an answer has nearly disappeared.

The information consumption flow has changed just as dramatically. AI systems, whether embedded in search engines or accessed directly as language models, now summarize and synthesize for the user. Instead of sifting through a dozen sources, a person receives a distilled answer and a handful of links to explore further if they want to go deeper. The cognitive load of researching has been reduced to a fraction of what it once was.

This is, on its surface, a remarkable efficiency gain. But efficiency is not the same as understanding.

## The Double-Edged Nature of Frictionless Information

Used intentionally, AI-powered search is genuinely powerful. It helps people move faster, focus their research more precisely, and surface relevant information without getting lost in irrelevant material. For product teams, knowledge workers, and anyone who needs to make informed decisions quickly, that is a real advantage.

But there is another side to this. When information arrives pre-summarized and pre-interpreted, it reduces the need for the reader to evaluate, challenge, or question what they are receiving. The critical thinking that used to happen during the search process, the weighing of sources, the noticing of contradictions, the forming of independent judgment, gets bypassed. Over time, that capacity can quietly atrophy.

There is also the issue of source blindness. When an AI surfaces an answer, the origin of that answer is often invisible or overlooked. People accept the output without asking where it came from, whether it is current, or whether it reflects a complete picture. This is a meaningful shift from the old model, where the act of clicking into individual sources at least created some exposure to the origin and context of information.

The parallel to social media is worth sitting with seriously. When social platforms first emerged, they represented an extraordinary democratization of expression. Anyone could share a thought with the world instantly, without the gatekeeping of traditional media. That was genuinely transformative. But the same architecture that opened the door to everyone also opened the door to everything, and over time, people found themselves doom-scrolling through a torrent of content, much of it misleading or low-quality, without the tools or habits to filter effectively. The phrase "amusing ourselves to death," borrowed from Neil Postman's 1985 book of the same name, captures something real about what happens when the medium optimizes for consumption over comprehension.

AI-mediated search is not social media, but the structural risk is similar. The easier and more frictionless information becomes to access, the less actively engaged the consumer needs to be. And the less actively engaged they are, the more vulnerable they become to accepting what is given rather than questioning it.

## What This Means for People Building AI Products

For product managers and teams building AI-native SaaS products, this behavioral shift is not just an interesting cultural observation. It has direct implications for how products get designed, how users are supported, and how trust gets built over time.

If users are increasingly arriving at products with information that has already been summarized and filtered by AI, they may be bringing assumptions or conclusions that are incomplete. Designing for that reality means thinking carefully about where in the user journey critical evaluation actually needs to happen, and whether the product is helping or hindering that process.

There is also a responsibility question. Building AI-powered features that further smooth away friction in information consumption is not inherently wrong, but it requires intentionality. The best AI products do not just make things faster. They make users more capable. There is a meaningful difference between a product that answers a question and a product that helps a user develop better judgment. The former is useful in the short term. The latter builds lasting value.

Brand and credibility also need to be rethought in this environment. In the old model, visibility was largely a function of keyword optimization and volume of content. In the AI-mediated model, what gets surfaced and trusted is shaped by something more holistic: the coherence, authority, and consistency of a brand's presence across many signals and touchpoints. A brand is increasingly read by AI the way a person is read by their professional network, not just by what they publish, but by how all of it holds together. That requires thinking in systems, not in individual tactics.

## The Awareness Gap Is Where the Real Risk Lives

The most important thing to understand about this shift is not the technology itself. It is the awareness gap between how AI is changing behavior and how conscious people are of that change happening to them.

Social media's harms were not obvious while people were in the middle of them. They crept in quietly, under the cover of convenience and connection. AI's influence on how people think, search, and evaluate information carries a similar risk of being invisible until the effects are already significant.

The antidote is not to resist AI or to treat it with suspicion. The tools are too useful and too embedded in how work gets done for that to be a practical position. The antidote is conscious use: approaching AI-generated information with the same skeptical curiosity that a good researcher would bring to any source, asking follow-up questions, seeking out primary material, and staying deliberate about when to outsource thinking and when to do it yourself.

Technology evolves faster than behavior, and behavior evolves faster than awareness. Closing that gap, between what AI can do and what people understand about how it is shaping them, is one of the more important challenges of this moment.

Stronger at the Core: Mental Health Lessons I Wish Every Product Manager Knew

Shipping never sleeps. As a product manager in tech, I have carried the weight of decision fatigue, shifting roadmaps, and dependency knots that do not care about work hours. For a long time, I tried to think my way out of stress. It did not work. What finally changed everything was accepting help, slowing down, and building an inner core that no deadline could shake.

## Why PM mental health frays in modern tech

Tech moves faster than human nervous systems. Always-on channels blur off-hours. Dependencies multiply across squads, vendors, and legacy stacks, while accountability stays ambiguous. One incident can rewire a quarter, and macro volatility adds layoffs or budget freezes to the mix. AI has shortened cycles and raised expectations, but it has not reduced uncertainty.

In this environment, PMs become shock absorbers. We translate ambiguity into bets, shield teams from churn, and manage stakeholders who each think their priority should be first. Without intentional guardrails, the role quietly drains our reserves.

## From breakdown to backbone: how I rebuilt

I reached a breaking point and stepped back from my career to heal. Advice like "do not take it personally" never landed for me, because the work is personal. So I did something different. I committed to therapy, every week, no matter how awkward or slow it felt. I gave myself mercy when progress looked like baby steps, and grace when there was regression.

Over time, small changes appeared before I noticed them. I could set boundaries without shame. I could voice what I needed. I could ask for help early. My presence softened, my judgment sharpened. That inner core became real: I know who I am, and I do not crumble in the face of a hot take or a surprise escalation. The impact at work is tangible. Stakeholders get clarity without drama. Teams get calm direction. Outcomes improve because decisions are no longer fear-driven.

## My early warning system

Today I watch for signals before stress becomes a spiral.

- Sleep shifts or weekend dread creep in.

- I reread Slack messages to find hidden meaning.

- Design and PRD reviews feel combative instead of curious.

- I keep tinkering with timelines even when the plan is good enough.

When two or more signals persist for a week, I act. I pause noncritical scope and surface risk explicitly. I book time with my counselor. I tell my manager what I need, whether that is air cover, a decision, or a trade-off. I journal for 15 minutes to separate facts, feelings, and fears. The goal is not to be tougher. It is to intervene sooner.
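For anyone who prefers to make that trigger explicit, here is a minimal sketch of the rule. It assumes a simple daily log of which signals showed up. The signal names, the `persistent_signals` and `should_act` helpers, and the reading of "persist for a week" as "present on each of the last seven days" are my illustrative assumptions, not a clinical tool.

```python
from datetime import date, timedelta

# Hypothetical labels for the four signals listed above.
SIGNALS = (
    "sleep_or_weekend_dread",
    "rereading_slack",
    "combative_reviews",
    "timeline_tinkering",
)

def persistent_signals(log, today, window_days=7):
    """Return the signals logged on every day of the window ending today.

    `log` maps a date to the set of signals noticed that day.
    """
    days = [today - timedelta(days=i) for i in range(window_days)]
    return sorted(
        s for s in SIGNALS
        if all(s in log.get(d, set()) for d in days)
    )

def should_act(log, today, threshold=2):
    """True when at least `threshold` signals have persisted for the window."""
    return len(persistent_signals(log, today)) >= threshold
```

The strict "every day" reading is deliberately conservative. Loosening it to "most days" would trigger earlier, which fits the goal of intervening sooner.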

## Team practices that protect outcomes and people

I now design calm into the product system.

- Clear escalation map: who decides, on what data, by when.

- Decision logs: reduce re-litigation and memory wars.

- Focus blocks and office hours: fewer meetings, better thinking.

- Sprint capacity at 80 percent: planned slack absorbs change.

- Post-incident decompression: normalize recovery, not just root cause.

- Async-first habits: crisp briefs, short updates, fewer pings.

We also track leading indicators of thrash. Deployment frequency volatility, bug reopen rate, and context switching between epic families forecast stress on teams and on the PM function. When those spike, we negotiate scope, not people.
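Two of those indicators are easy to compute from data most teams already have. The sketch below is one possible way to do it, not a standard: it treats deployment frequency volatility as the coefficient of variation of weekly deploy counts, bug reopen rate as reopened bugs divided by closed bugs, and a "spike" as a value more than 1.5 times its recent average. The function names and the 1.5 factor are assumptions for illustration, and context switching between epic families is left out because it depends on how a team tags its work.

```python
from statistics import mean, pstdev

def deploy_volatility(weekly_deploys):
    """Coefficient of variation of weekly deploy counts (0.0 means perfectly steady)."""
    avg = mean(weekly_deploys)
    return pstdev(weekly_deploys) / avg if avg else 0.0

def bug_reopen_rate(reopened, closed):
    """Share of closed bugs that were later reopened."""
    return reopened / closed if closed else 0.0

def is_spiking(history, current, factor=1.5):
    """Flag a value that exceeds `factor` times the average of recent history."""
    return current > factor * mean(history)
```

For example, `is_spiking([0.10, 0.12, 0.11], bug_reopen_rate(4, 20))` compares this sprint's reopen rate against the last three sprints. A spike is the cue to reopen the scope conversation.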

These practices are not soft. They are operational risk management. Clients and partners feel the difference. Decisions come faster. Communication is cleaner. The team sustains pace without burning the core.

The most important lesson is simple. Trust your gut early. If something feels off, it probably is. Reach for help before the smoke becomes fire. Build systems that protect your attention and your humanity. Start with one boundary and one ritual this week. Your future self and your team will thank you.

How Non-Technical Product Managers Can Thrive in the AI Era Without Drowning in Hype or Losing Their Edge

AI didn’t just add a new tool to the product manager toolkit. It changed the pace of product work, the language teams use to make decisions, and the baseline expectations for what it means to be credible in a room.

For product managers without a technical background, the shift can feel especially sharp. Many have built careers on coordination, strategy, stakeholder alignment, and the kind of emotional intelligence that keeps complex work moving. Then, almost overnight, AI became the loudest conversation in tech. Constant releases. Constant predictions. Constant pressure to keep up.

The challenge is not simply learning a new system. It is learning in an environment where the system keeps changing, where hype travels faster than understanding, and where the fear of being behind can quietly erode confidence.

The good news is that the path to thriving is not reserved for the most technical PM in the org. Some of the most compelling voices in the AI space today are product leaders who started from a non-technical base, leaned into focused learning, and turned their strengths in communication and perspective into an advantage.

## The real challenge is not AI. It is the feeling of being forced to learn everything at once

Product managers have always needed to learn the technology of their domain. That part is not new. What is new is how AI arrived: fast, noisy, and socially amplified.